It’s a neutral technology that doesn’t require the image to be CSAM-related in order to work.” “Pragmatically, there’s no reason why they couldn’t. “As soon as Microsoft brought it out for CSAM, they heard ‘Why not use it for terrorism?’” he says. I feel very confident this technology could be used to spy on people in other countries.”Īccording to Williams, the same concern was raised when Photo DNA was publicly licensed in 2014. “And if you look at the specifics of Edward Snowden’s revelations, it’s clear that our national security agencies may stick to certain rules in the US, but outside there are no rules at all. “This would allow agencies to spy on our phones to find, say, pictures that the Pentagon says compromise national security or belong to terrorists,” Wu tells Avast. There are “far less checks and balances” on behind the scenes deals between the US government and tech companies, in the name of national security, than the general public may believe. That’s the problem - the definition of ‘doing something wrong’ could be broadened.”īrianna Wu - a computer programmer, video game creator, online advocate, and Executive Director of Rebellion PAC who describes herself as “an Apple fan” - points out that the US government could theoretically create legislation giving them permission to use this technology without the general public ever knowing. And you have this little snooper on the device that’s just reading everything and checking it, not sending it to Apple unless you’re doing something wrong.

“It’s like we’re peeking over your shoulder, but we’re wearing sunglasses and saying the sunglasses can only see bad things.

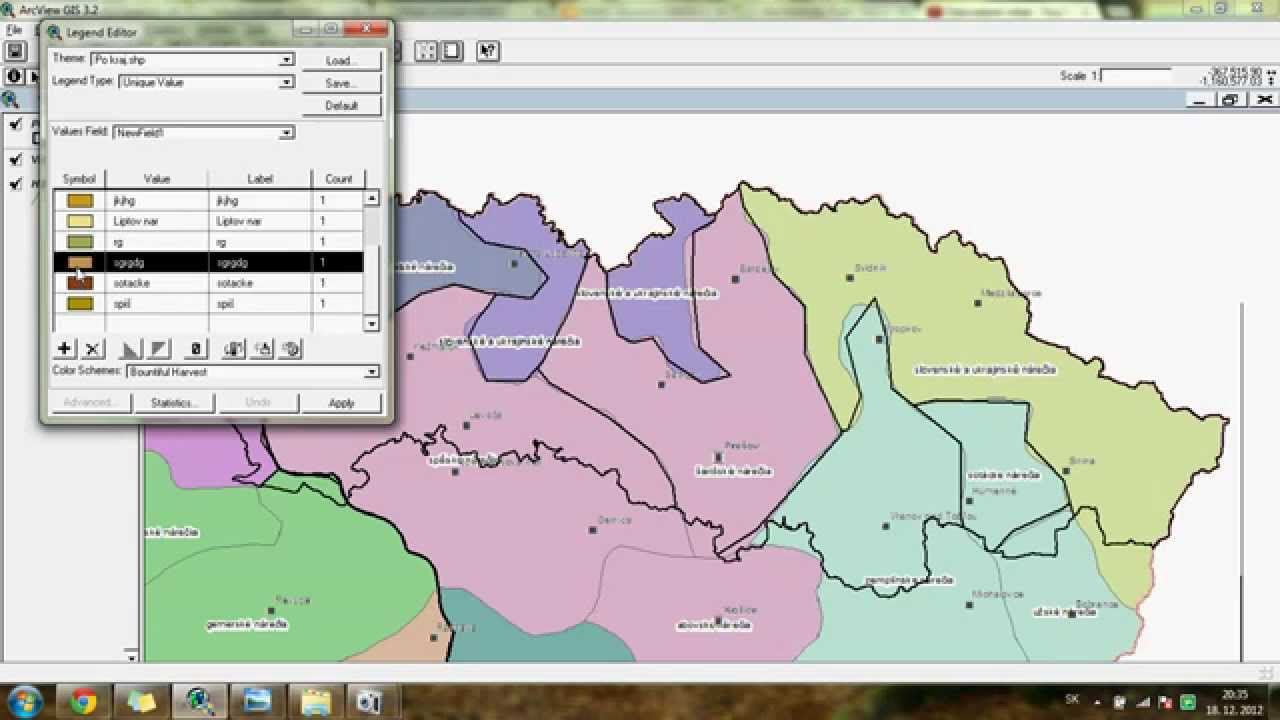

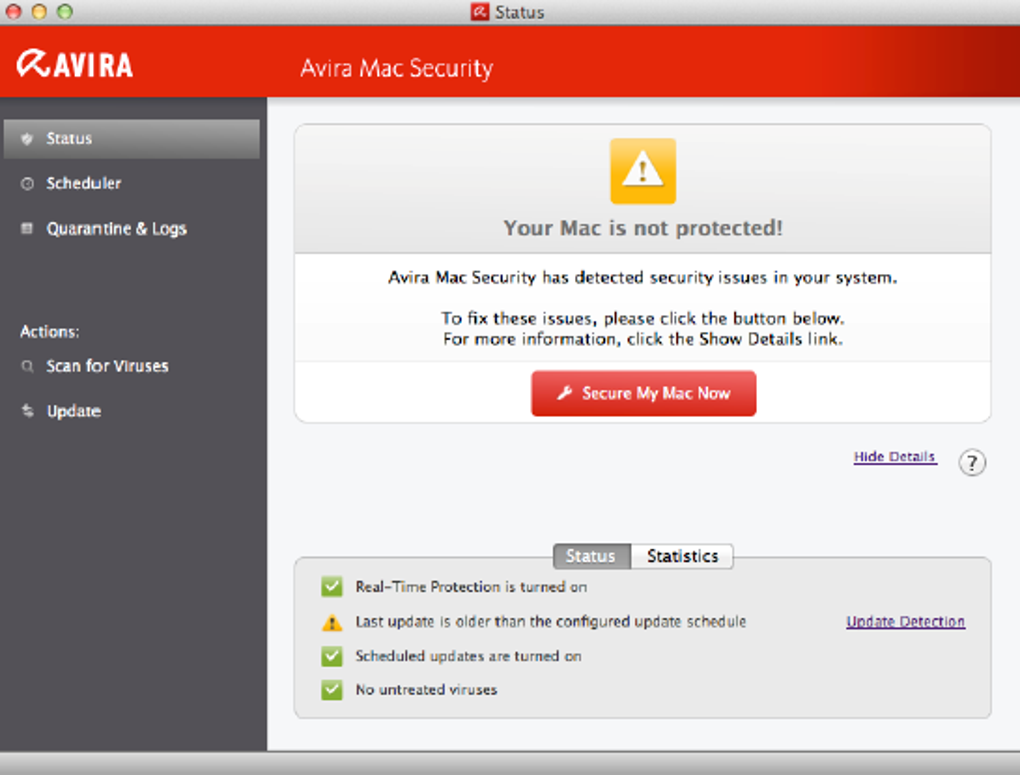

“Now that this is possible to have access, authorities will push for more access,” he says. McNamee questions whether companies should scan people’s devices at all. While combating CSAM is extremely important, privacy and security experts are concerned about the possible unintended consequences of this technology. So, from a privacy perspective, that’s technically a very pro-privacy way to go.” “It’s really minimizing the data you’re sending. “If you are going to check something on someone’s device, a really good way of doing that is to not pull that data off their phone and onto your servers,” McNamee says. That method, Avast Chief Privacy Officer Shane McNamee says, is the right one. NeuralHash processes the data on the users’ device, before it’s uploaded to iCloud. Apple is saying that if it’s above a certain percentage score, they’ll move on to the next review phase.” The potential unintended consequences of NeuralHash But if someone alters it - say they change it by cropping - then maybe it’s only a 70 percent match. “Say two images are completely identical - that’s a 100 percent match. “With threshold secret sharing, there’s some kind of scoring in place for an image,” Williams says. The technology, Avast Global Head of Security Jeff Williams says, sounds similar to the Photo DNA project that was developed during his time at Microsoft. And because editing an image traditionally changes a hash, Apple has added additional layers of scanning called “threshold secret sharing” so that “visually similar” images are also detected. Instead, it creates a string of numbers and letters - called a “hash” - for each image and then checks it against their database of known CSAM, according to reporting by TechCrunch. The scanning technology Apple is implementing - called NeuralHash - doesn’t compare images the way human eyes do.

The changes, which Apple says will be released in the US later this year, were first leaked via a tweet thread by a Johns Hopkins University cryptography professor who heard about them from a colleague. But could there be unintended consequences?Īpple is taking steps to combat child sex abuse materials (CSAM), including implementing technology that will detect known-CSAM uploaded to iCloud an iMessage feature that will alert parents if their child sends or receives an image with nudity and a block if someone tries to search for CSAM-related terms on Siri or Search.
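For readers who want a feel for the image hashing and percentage matching described in this article, here is a minimal Python sketch. It is not Apple's NeuralHash or Microsoft's PhotoDNA, neither of which is specified here. It uses a much simpler, well-known stand-in called an "average hash", and every name in it (`average_hash`, `similarity`, `is_flagged`) as well as the 90 percent threshold is a hypothetical choice made purely for illustration.

```python
from typing import Iterable, List

Grid = List[List[int]]  # grayscale pixel values, 0-255 (assumed input format)


def average_hash(pixels: Grid) -> str:
    """Toy perceptual hash: one bit per pixel, set when the pixel is
    brighter than the image's mean brightness. Returned as a hex string."""
    flat = [p for row in pixels for p in row]
    if not flat or len(flat) % 4 != 0:
        raise ValueError("pixel count must be a non-zero multiple of 4")
    mean = sum(flat) / len(flat)
    bits = "".join("1" if p > mean else "0" for p in flat)
    return format(int(bits, 2), f"0{len(bits) // 4}x")


def similarity(hash_a: str, hash_b: str) -> float:
    """Percentage of bits that are identical in two equal-length hex hashes."""
    if len(hash_a) != len(hash_b):
        raise ValueError("hashes must have the same length")
    total_bits = len(hash_a) * 4
    differing = bin(int(hash_a, 16) ^ int(hash_b, 16)).count("1")
    return 100.0 * (total_bits - differing) / total_bits


def is_flagged(image_hash: str, known_hashes: Iterable[str],
               threshold: float = 90.0) -> bool:
    """True if the hash is at least `threshold` percent similar to any
    known hash. The default threshold is illustrative only."""
    return any(similarity(image_hash, k) >= threshold for k in known_hashes)


# A 4x4 checkerboard stands in for a "known" image.
original = [[10, 200, 10, 200],
            [200, 10, 200, 10],
            [10, 200, 10, 200],
            [200, 10, 200, 10]]

# A uniformly brightened copy: every pixel changes, the hash does not.
brightened = [[p + 5 for p in row] for row in original]

# A copy with two corner pixels overwritten: the hash changes slightly.
altered = [row[:] for row in original]
altered[0][0] = 250
altered[3][3] = 250

known = [average_hash(original)]                  # "5a5a"
print(similarity(known[0], average_hash(brightened)))  # 100.0
print(similarity(known[0], average_hash(altered)))     # 87.5
print(is_flagged(average_hash(altered), known))        # False at a 90% threshold
```

The point of the sketch is only the shape of the idea: a hash that survives small edits, a similarity score between two hashes, and a cut-off that decides whether anything further happens. How the real systems compute their hashes and set their thresholds is not something this example claims to show.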
